Anthropic - An AI Concern For All

Segment #799

The development of high-risk AI (technologies with the potential to destabilize civilization) requires a delicate balance between moral urgency and pragmatic safeguards. Navigating this landscape involves several hard truths about governance and security:

National Interest vs. Popularity: Governments inevitably prioritize decisions they believe serve the long-term safety of their citizens, even when those choices lack public support.

Developing AI capable of posing an existential threat demands more than ethics; it requires practical fortitude. Leaders must often choose the common good over common opinion, relying on necessary confidentiality to prevent national paralysis. Regulating high-risk AI requires robust guardrails that transcend public sentiment. To govern effectively and maintain security, states must operate with a degree of discretion and autonomy that polling simply cannot provide.

The History of Anthropic

The co-founders of Anthropic discuss the past, present, and future of Anthropic. From left to right: Chris Olah, Jack Clark, Daniela Amodei, Sam McCandlish, Tom Brown, Dario Amodei, and Jared Kaplan.

The story of Anthropic is one of the most dramatic arcs in the AI industry—a "splinter cell" that grew from a safety-focused research lab into a $380 billion titan currently locked in a high-stakes legal battle with the U.S. government.

The Origin: The Great Schism (2021)

Anthropic was born out of a fundamental disagreement over safety at OpenAI. In late 2020, a group of senior leaders—most notably siblings Dario Amodei (OpenAI’s VP of Research) and Daniela Amodei (VP of Safety and Policy)—grew concerned that OpenAI was becoming too commercial and moving too fast without enough safety guardrails.

In 2021, they left to found Anthropic as a Public Benefit Corporation (PBC). Their goal was to build "steerable, interpretable, and trustworthy" AI. They pioneered a technique called Constitutional AI, in which the model is given a literal "constitution" (principles drawn from sources such as the UN Universal Declaration of Human Rights) and uses it to self-correct its behavior rather than relying solely on human feedback.

The Evolution: The Rise of Claude

While initially seen as the "quiet" alternative to ChatGPT, Anthropic’s Claude series eventually became a powerhouse, particularly for technical and enterprise work.

| Era | Key Milestone |
| --- | --- |
| 2023 | Released Claude 1.0 and Claude 2, gaining a reputation for a "warmer" personality and a much larger "context window" (the ability to read entire books at once). |
| 2024 | Released Claude 3, with the "Opus" model briefly overtaking GPT-4 in many benchmarks. Introduced Artifacts, allowing users to see and run code in real-time. |
| 2025 | Launched Claude 4 and Claude Code, an agentic tool that can autonomously write and debug software. This drove a massive revenue surge from $1B to $14B. |

Where It Is Now: March 2026

As of today, Anthropic is at a critical crossroads, balancing explosive financial success with an unprecedented political crisis.

Financial Juggernaut: In February 2026, Anthropic closed a $30 billion Series G funding round led by GIC and Coatue, valuing the company at $380 billion. It is currently preparing for one of the largest IPOs in history.

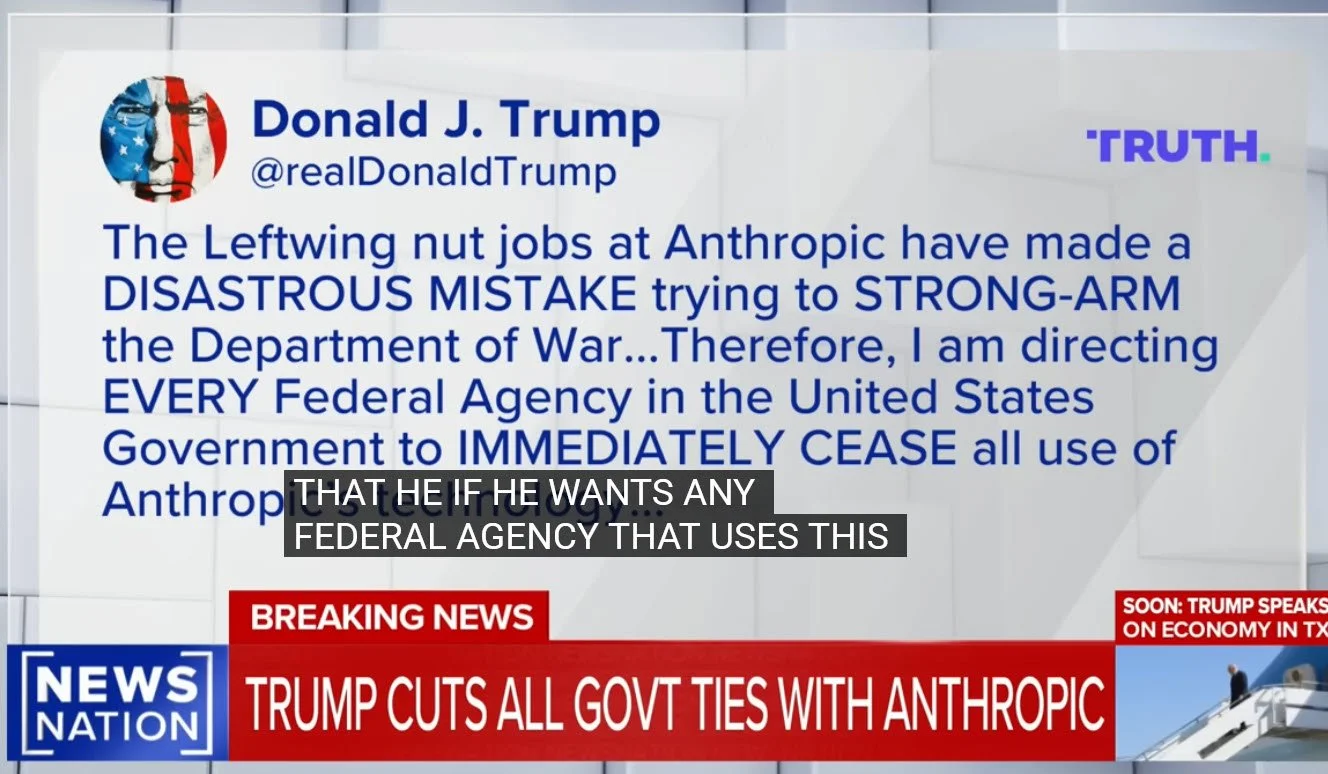

The "Pentagon Standoff": Anthropic is currently in a "war of words" with the U.S. Department of War (formerly the DoD). The government is demanding unrestricted access to Claude for "all lawful uses," including autonomous weaponry. Anthropic has refused, citing its safety "red lines" against mass surveillance and autonomous killing.

Supply Chain Risk: In a shocking move just days ago (late February 2026), the Trump administration designated Anthropic as a "supply chain risk"—a label typically reserved for foreign adversaries like Huawei. This threatens to bar military contractors from using Claude, which currently authors an estimated 4% of all public code on GitHub.

Technological Frontier: The company recently released Claude Opus 4.6, which features "Adaptive Thinking," allowing the model to decide how much "brain power" to spend on a task to save the user money and time.

Summary of Current Status

| Metric | Status (March 2026) |
| --- | --- |
| Valuation | $380 Billion |
| Flagship Model | Claude Opus 4.6 |
| Annual Revenue | $14 Billion (run-rate) |
| Major Backers | Amazon ($8B+), Google, Microsoft |
| Key Conflict | Legal battle with the U.S. Gov over AI safety guardrails |

Anthropic began as a safe haven for researchers who feared AI. Today, it is a global infrastructure provider that is testing whether a private company can maintain ethical "red lines" when they conflict with national security demands.


OpenAI CEO Sam Altman announced late Friday that the company had signed a deal with the Pentagon for its AI tools to be used in the military's classified systems, but with guardrails seemingly similar to those its rival Anthropic had requested.

Morality, the Thread We Cannot Let Go

Carlos Barria/Reuters

2/22/2026|Updated: 2/28/2026

Most people have never heard of Mrinank Sharma. That is part of the problem.

On Feb. 9, 2026, Sharma resigned from Anthropic, one of the most influential artificial intelligence companies in the world. He had led its Safeguards Research Team, the group responsible for ensuring that Anthropic’s AI could not be used to help engineer a biological weapon. His final project was a study of how AI systems distort the way people perceive reality. It was serious, consequential work for humankind. His resignation letter was seen more than 14 million times on X. It opened with the words, “the world is in peril.” And it ended with a poem and by announcing that he was leaving one of the most consequential jobs in artificial intelligence to pursue a poetry degree. Yes, you read that right: peril and poetry.

The poem he quoted is “The Way It Is,” by the American poet William Stafford. It speaks of a thread that runs through a life, a thread that goes among things that change but does not change itself. While you hold it, you cannot get lost. Tragedies happen. People suffer and grow old. Time unfolds, and nothing stops it. And the final line: you don’t ever let go of the thread.

Although he didn’t state it explicitly, I argue that that thread is morality. It is the enduring sense that some things are right and some things are wrong—not because a law says so, and not because it is profitable, but because human beings, at their best, have just always known it.

Sharma spent two years watching that thread being let go under pressure, in rooms the public is never shown.

His letter said: “Throughout my time here, I’ve repeatedly seen how hard it is to truly let our values govern our actions.

“I’ve seen this within myself, within the organization, where we constantly face pressures to set aside what matters most, and throughout broader society, too.”

He wrote that humanity is approaching a threshold where “our wisdom must grow in equal measure to our capacity to affect the world, lest we face the consequences.” He wanted to contribute in a way that felt fully in his integrity and to devote himself to what he called “the practice of courageous speech.”

A man who built defenses against bioterrorism concluded that the most important thing he could do next was learn to speak with honesty and courage. That is a major signal about what is happening behind closed doors in AI research and development.

Many experts have compared the development of AI to the development of the atomic bomb. The Manhattan Project was built in total secrecy. The public had no knowledge of it, no voice in how it was used, and no say in what came after. When it was over, some of the scientists who built it spent the rest of their lives in anguish. Several walked away during the project itself.

Sharma was not alone. Numerous safety researchers have walked away from AI projects at multiple companies. These departures may be the only signals we, the public, have, because almost everything else about AI development is happening beyond public view. The internal debates, the safety trade-offs, the negotiations over what this technology will and will not be permitted to do: none of it is being shared with the people whose lives it will most profoundly shape. We are not part of this conversation. We are being presented with outcomes and told to adapt.

John Adams wrote that the Constitution was made only for a moral and religious people, and is wholly inadequate for any other. George Washington warned that liberty cannot survive the loss of shared moral principles. The founders studied the collapse of republics throughout history and arrived at the same conclusion: The machinery of freedom requires a moral people to sustain it. Laws and institutions are not enough on their own. They depend on citizens and leaders who hold themselves to something that exists before the law and above it.

That is the thread of human society, and no AI system holds it. If people allow AI to replace the question of right and wrong with the measure of what is legal and permitted, the machine will carry that measure forward at a scale and speed that no previous generation has had to reckon with.

Sharma ended his resignation letter with Stafford’s line: “You don’t ever let go of the thread.”

We are at a crossroads not unlike the one the atomic scientists faced.